
Previously, loss functions had to be implemented by hand and tailored to each problem. Libraries such as PyTorch now make this easy: you can create a loss function with a single line of code.

Have you ever wondered how we humans evolved so much? We learn from our mistakes and continuously try to improve ourselves on the basis of those mistakes. The same is true of machines: like humans, they can learn from their mistakes. But how? In neural networks and AI, we give the algorithm freedom to find the best prediction, but it cannot improve without a way to measure how wrong its earlier predictions were. This is where the loss function comes into the picture.

A loss function measures the mistakes a model makes. The further the prediction of a machine learning algorithm is from the ground truth, the larger the loss, and the model improves its outputs by decreasing that loss. Some loss designs suit a problem better than others.
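As a minimal sketch of that idea, the snippet below compares the loss for a prediction that favors the correct class with the loss for one that favors a wrong class. The logit values and the target are made up for illustration; only torch.nn.CrossEntropyLoss is taken from PyTorch itself.

```python
import torch

# Hypothetical single sample whose true class is index 0 (out of 3 classes).
target = torch.tensor([0])

# Two made-up sets of raw network outputs (logits) for that sample.
good_logits = torch.tensor([[4.0, 0.5, 0.2]])  # strongly favors the true class
bad_logits = torch.tensor([[0.2, 0.5, 4.0]])   # strongly favors a wrong class

# Creating the loss function really is one line.
loss_func = torch.nn.CrossEntropyLoss()

good_loss = loss_func(good_logits, target)
bad_loss = loss_func(bad_logits, target)

print(f"loss for the good prediction: {good_loss.item():.4f}")
print(f"loss for the bad prediction:  {bad_loss.item():.4f}")
```

The prediction that is further from the ground truth gets the larger loss, which is the signal training tries to reduce.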

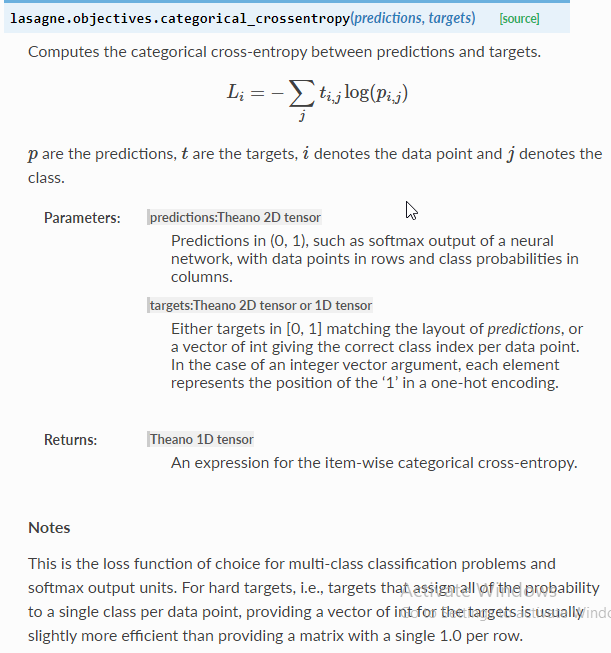

When designing a house, there are many alternatives, and the same holds when designing a neural network. To summarize, when designing a neural network multi-class classifier, you can use CrossEntropyLoss with no activation, or you can use NLLLoss with log-SoftMax activation. This applies only to multi-class classification; binary classification and regression problems have a different set of rules.
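The two options in this summary can be checked against each other numerically. The following is a small sketch, assuming a random batch of 5 samples and 3 classes (the seed, shapes, and targets are arbitrary choices, not real data):

```python
import torch

torch.manual_seed(0)  # arbitrary seed, only for repeatability

# Made-up batch: 5 samples, 3 classes. Values are random, not real data.
logits = torch.randn(5, 3)
targets = torch.tensor([0, 2, 1, 1, 0])

# Option 1: CrossEntropyLoss applied directly to raw logits (no activation).
ce_loss = torch.nn.CrossEntropyLoss()(logits, targets)

# Option 2: NLLLoss applied to log-softmax output.
log_probs = torch.log_softmax(logits, dim=1)
nll_loss = torch.nn.NLLLoss()(log_probs, targets)

print(ce_loss.item(), nll_loss.item())
print("same loss:", torch.allclose(ce_loss, nll_loss))
```

Both options should report the same loss up to floating-point rounding, so the choice is about convenience rather than about the result.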

The CrossEntropyLoss with logits approach is easier to implement and is by far the most common approach. The demo run on the left uses CrossEntropyLoss with no activation on the output nodes. The demo run on the right uses NLLLoss with LogSoftmax activation on the output nodes. The results are identical.

A possible 4-7-3 network definition and its associated training code, for the NLLLoss variant, uses the following pieces. The network stores the activation as a Module, self.apply_log_soft = T.nn.LogSoftmax(dim=1), and applies it to the output z in forward(). The function form, z = T.log_softmax(z, dim=1), can be used instead of the Module; z = T.log(T.softmax(z, dim=1)) gives the same values but is inefficient. The loss is loss_func = T.nn.NLLLoss(), which assumes LogSoftmax() has been applied, and the optimizer is optimizer = T.optim.SGD(net.parameters(), lr=lrn_rate).

The CrossEntropyLoss with logits output technique (logits just means no activation applied) is really just wrapper code around the older NLLLoss with LogSoftmax technique. In short, when using the newer and simpler approach for multi-class classification, you don't apply any activation to the output, and CrossEntropyLoss applies log-SoftMax internally. When using the older approach, you apply LogSoftmax to the output and NLLLoss assumes you've done so.

The difference matters when making a prediction. With the CrossEntropyLoss technique, the raw output values are logits, so if you want to view probabilities you must apply SoftMax. With the older NLLLoss technique, the raw output values are the log of SoftMax, so if you want to view probabilities you must apply the exp() function.
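To make the prediction step concrete, here is a hedged sketch of a 4-7-3 network that can produce either kind of output. The class name Net, the layer names hid1 and oupt, the tanh hidden activation, and the constructor flag are assumptions for illustration; only the T alias, apply_log_soft, and the LogSoftmax(dim=1) Module come from the fragments above.

```python
import torch as T

class Net(T.nn.Module):
    """4-7-3 classifier; use_log_soft=True gives the NLLLoss-style output."""

    def __init__(self, use_log_soft=False):
        super().__init__()
        self.hid1 = T.nn.Linear(4, 7)   # hypothetical layer name
        self.oupt = T.nn.Linear(7, 3)   # hypothetical layer name
        # Module form of the activation, or None for raw logits.
        self.apply_log_soft = T.nn.LogSoftmax(dim=1) if use_log_soft else None

    def forward(self, x):
        z = T.tanh(self.hid1(x))        # tanh is an assumed hidden activation
        z = self.oupt(z)                # raw logits
        if self.apply_log_soft is not None:
            z = self.apply_log_soft(z)  # log of SoftMax
        return z

T.manual_seed(1)  # arbitrary seed, only for repeatability
net_ce = Net(use_log_soft=False)    # pair with CrossEntropyLoss
net_nll = Net(use_log_soft=True)    # pair with NLLLoss
net_nll.load_state_dict(net_ce.state_dict())  # give both the same weights

# One illustrative input row with four predictor values.
x = T.tensor([[5.1, 3.5, 1.4, 0.2]])

with T.no_grad():
    logits = net_ce(x)       # raw logits
    log_probs = net_nll(x)   # log of SoftMax

probs_from_logits = T.softmax(logits, dim=1)  # logits -> probabilities
probs_from_log = T.exp(log_probs)             # log-probabilities -> probabilities

print(probs_from_logits)
print(probs_from_log)
```

With identical weights, both routes should print the same three probabilities, each row summing to 1.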

Suppose you are looking at the Iris dataset, which has four predictor variables and three classes. If you are designing a neural network multi-class classifier using PyTorch, you can use cross entropy loss (torch.nn.CrossEntropyLoss) with logits output (no activation) in the forward() method, or you can use negative log-likelihood loss (torch.nn.NLLLoss) with log-softmax (the torch.nn.LogSoftmax() module or the torch.log_softmax() function) in the forward() method.
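A short training sketch for the first option might look like the following. It does not load the real Iris data: the class centers, noise level, learning rate, and epoch count are all made-up values that only mimic the four-predictor, three-class shape, and T.nn.Sequential stands in for a hand-written forward() method.

```python
import torch as T

T.manual_seed(0)  # arbitrary seed, only for repeatability

# Synthetic stand-in for Iris-shaped data: 3 classes, 4 predictors,
# 30 rows per class scattered around made-up class centers.
centers = T.tensor([[5.0, 3.4, 1.5, 0.2],
                    [5.9, 2.8, 4.3, 1.3],
                    [6.6, 3.0, 5.6, 2.0]])
x = centers.repeat_interleave(30, dim=0) + 0.3 * T.randn(90, 4)
y = T.arange(3).repeat_interleave(30)  # class labels 0, 1, 2

# 4-7-3 network that returns raw logits (no output activation).
net = T.nn.Sequential(
    T.nn.Linear(4, 7),
    T.nn.Tanh(),        # assumed hidden activation
    T.nn.Linear(7, 3),
)

loss_func = T.nn.CrossEntropyLoss()  # applies log-SoftMax internally
lrn_rate = 0.01                      # assumed learning rate
optimizer = T.optim.SGD(net.parameters(), lr=lrn_rate)

losses = []
for epoch in range(500):
    optimizer.zero_grad()
    loss = loss_func(net(x), y)  # full-batch loss on the synthetic data
    loss.backward()
    optimizer.step()
    losses.append(loss.item())

print(f"first epoch loss: {losses[0]:.4f}")
print(f"last epoch loss:  {losses[-1]:.4f}")
```

Switching to the second option would mean appending T.nn.LogSoftmax(dim=1) to the network and replacing the loss with T.nn.NLLLoss(); the rest of the loop stays the same.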
